The unbelievable weakness of identification/authentication, bank edition

So I am in the process of opening a new bank account + credit card with my existing institution. And I call to check on the status of the credit card. And the call goes like this: ring ring, press 1 for English, 2 pour français, type in your 16-digit card number, what is the…

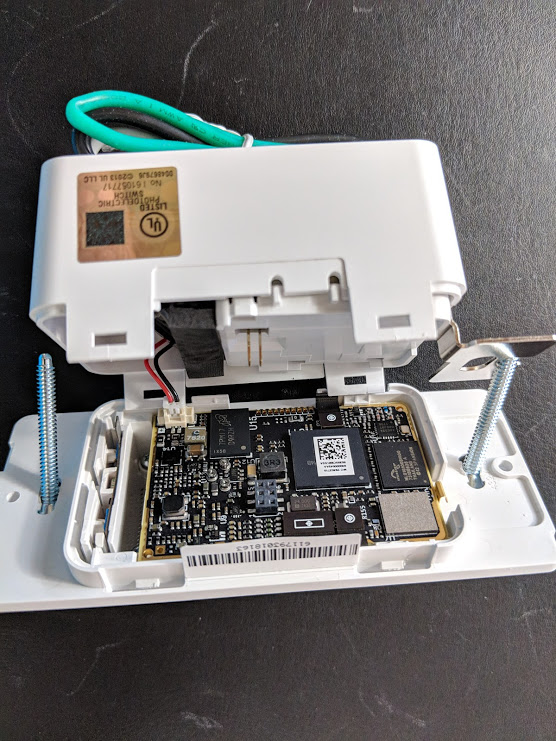

The smarts inside the smart switch

There’s an old joke, something about a kid getting a drum set for Christmas and dad giving the kid a knife and saying “ever wonder what’s inside your new drum set?” Well, you must be dying to know what’s in your new Ecobee Switch+. And I can’t believe I forgot to add it to the previous post.…

Smarten up your wall switch! The Ecobee Switch+ (with various assistants)

So I received my shiny new ecobee Switch+. It takes one of your old stupid wall switches and smartens it up. It’s what you need! The ecobee Switch+ integrates with Google/Amazon/Apple/Samsung/IFTTT/… It’s got a little microphone/speaker, and a regular old button to turn on/off. Who doesn’t want their wall talking to them? You need to make…

Advertisement traffic is >=40% of bandwidth

OK, you might be the last person standing w/o running an ad blocker. But did you know that up to 40% of your bandwidth is going to those ads? Read this fairly simple study from SFU. Now, depending on the tariff you are on (worst case being pay-per-byte, best case being unlimited) this means that up…

The curious case of the door latch, or, is there anything a rotary tool won’t solve?

OK we’ve all been there. That interior door that doesn’t latch too reliably. In our case, on the master bath. When a house gets middle-aged it, like me, gets a little crooked (squish as they say out east) in places. And, this causes a set of shimming and trimming to cause things to come back…

Long Strange Trip