Logging. Its the modern day tower of Babel.

So I’m using an EFK (Elasticsearch, Fluent, Kibana) stack for log management. Its a common combination (don’t argue with me that logstash is better, its merely different). And as I’ve wandered down the rabbit hole of trying to get semantic meaning from log messages, I’ve learned a lot, and I’ll share one of those tips with you.

So first, the problem domain. You have a fleet of well orchestrated containers, grouped into Pods, run from replication controllers, grouped under namespaces in Kubernetes. Its a big fleet, things come and go all the time and everyone is happy with that. But, they have needs. Audit: is someone hacking me? DevOps: is there a bug we haven’t found that is causing crashes that are hidden by the restart mechanism? etc.

Now, log messages were usually designed to be read by the person who wrote the code on the hour it was written. They are very hard to assign meaning to generally.

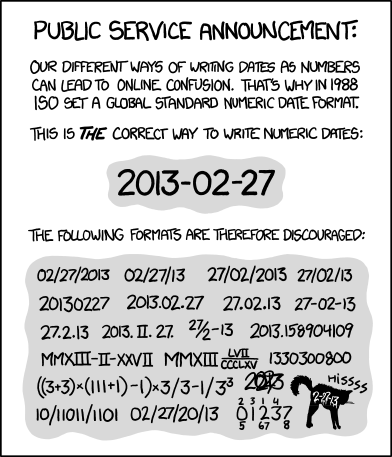

The first problem is timestamps. For some reason not everyone got the memo about ISO8601. Stop with the “abbreviation of month in obscure language”_day-in-roman-numeral/year-of-the-pig type thing. Its YYYY-mm-dd. So you have to normalise a bunch of backwards dates. OK, no problem, we got this.

The next problem is the messages. Some are in JSON, some are raw-text. That raw-text might be 7-bit clean ASCII. It might be UTF-8. Maybe it has single quotes or semi-colons, so make sure you don’t get a ‘little bobby tables’ problem here.

Now we get to the real issue. The developer might have put: “Ooops. This shouldn’t occer<sic>. ERR: 5762” Or they might have e.g. an Nginx or apache-style log with the user-agent, url, etc, delimited by a weird sprinkling of spaces, quotes, and square brackets. And you want to extract the fields to make them searchable (URI, CODE, METHOD, …). What to do?

Well, enter fluent-bit. It runs pretty much anywhere, including as a DaemonSet in your Kubernetes cluster, watching all the logs go by. If you read the user-guide, the source-code, and use the force, you can add some semblance of meaning to this highly-entropic log world.

One of the tools that fluent-bit has is the ‘annotation’. This allows you to say: “fluentbit.io/parser: foobar”, and then have a foobar regex. Great. But… what about Istio? Istio uses a mutating web-hook to inject what are called sidecars (a 2nd container in your Pod, bolted to the first). Since in Kubernetes annotations go at the Pod level, not the container level, you can’t simply add some magic as you install Istio (side note, my pull-request to Istio was accepted, so the helm chart now has these annotations settable for e.g. the ingressgateway). Istio uses a tool called ‘envoy‘ to act as its dataplane, and logs messages in a custom format, and you can parse it with this mouthful (don’t try to pronounce this):

^(?<host>[^ ]*) - \[(?<real_ip>[^ ]*)\] - (?<user>[^ ]*) \[(?<time>[^\]]*)\] "(?<method>\S+)(?: +(?<path>[^\"]*?)(?: +\S*)?)?" (?<code>[^ ]*) (?<size>[^ ]*) "(?<referer>[^\"]*)" "(?<agent>[^\"]*)" (?<request_length>[^ ]*) (?<request_time>[^ ]*) \[(?<proxy_upstream_name>[^ ]*)\] (?<upstream_addr>[^ ]*) (?<upstream_response_length>[^ ]*) (?<upstream_response_time>[^ ]*) (?<upstream_status>[^ ]*) (?<last>[^$]*)

OK, that was easy. But how do we teach fluent-bit to apply this regex to the istio-proxy container (only)?

Well, first I added some code to fluent-bit (its all in the upstream now in various pull-requests and merges) to allow one to specify the container (and stream) in the annotation. But this still didn’t help since the side-car injection ends up with no annotation.

So then I dug into the tag mechanism. And here is my solution (note it relies on a Pull Request not yet merged).

[INPUT]

Name tail

Path /var/log/containers/*.log

Parser docker_no_time

Tag kube.<namespace_name>.<pod_name>.<container_name>

Tag_Regex (?<pod_name>[a-z0-9]([-a-z0-9]*[a-z0-9])?(\.[a-z0-9]([-a-z0-9]*[a-z0-9])?)*)_(?<namespace_name>[^_]+)_(?<container_name>.+)-

Refresh_Interval 5

Mem_Buf_Limit 5MB

Skip_Long_Lines On

[FILTER]

Name kubernetes

Match kube.*

Kube_URL https://kubernetes.default.svc:443

Kube_CA_File /var/run/secrets/kubernetes.io/serviceaccount/ca.crt

Kube_Token_File /var/run/secrets/kubernetes.io/serviceaccount/token

Merge_JSON_Log On

K8S-Logging.Parser On

K8S-Logging.Exclude On

Regex_Parser kube-tag

[FILTER]

Name parser

Match kube.*.istio-proxy

Key_Name log

Parser envoy

So what this does is first parse the container raw logs (which are in docker format, e.g. { time: x, log: y }. As part of the tail input I apply a regex to create the tag (this is not documented anywhere, this is from code inspection in fluent-bit) such that you end up with a tag of ‘kube.namespace.pod.container’. This allows later route matching.

I then run the Kubernetes filter across all kube.*. This uses the Kubernetes API for enrichment, etc, and runs any parsers suggested by the annotation. For the istio-proxy container there is no suggested parser, so it does a Docker ‘decode_as’ which unescapes strings etc, but otherwise leaves the text in ‘log’.

I then run a regex-based parser on kube.*.istio-proxy, e.g. all the istio-proxy named containers. And this unpacks the strings and goes on with life.

Sounds easy in this write-up. It wasn’t. Enjoy.

Leave a Reply