Recently some miscreants broke into an Ontario local health network (Care Partners) and made off with… a lot. And now they are asking for ‘ransom’. Is this unique? No, there have been many similar breaches recently, likely far more than become public (many companies probably pay the ransom quietly). What can one do when faced with the challenge of defending valuable data without being an IT defence specialist?

Wikipedia has a list of military strategies for defence. The most commonly used one in cyber defence is ‘choke point’ (usually called perimeter). In this article by the CBC, there are some quotes that caught my eye:

“Encryption is one piece of the puzzle,” said lawyer Mary Jane Dykeman, a partner with the Toronto-based boutique firm DDO Health Law. “But it’s also possible that you hold information in a repository or in a system where, in and of itself it’s not encrypted, but you have a secure perimeter, if you will. You have a fence around it that people can’t just walk through.”

Well yes, if you can have a secure perimeter, it does make it simpler. You muster all of your defence strength at one spot that the enemy is forced to come through (choke point). But what if they are not forced to come through that spot? This gives rise to a different set of strategies. Why not also employ ‘defence in depth’? You know they are going to get in, so wear them down a bit, make them spend some time, and hope you can spot them.

But here we diverge from the traditional military methodologies and start thinking about another great defence mechanism, our immune system. Think of our skin as the ‘firewall’. Outside bad, inside good. It keeps bacteria from killing us. But, interestingly, the human body has evolved so that this is not the only mechanism. It turns out you can get a cut, let some bad stuff in, and (usually) not die. Why? Because there are white blood cells. They go to work after the first breach, attach themselves to the attackers, learn their weaknesses, and cluster around the things that need protecting. It’s kind of like having a distributed perimeter, a defence in depth.

Here the company made a mistake: data at rest was not encrypted. Encrypting it would have mitigated the damage. They made a second mistake: some weak passwords. They made a third mistake: some old, vulnerable software. It is easy to say “they need to become better human beings, mistakes are unacceptable”. Yes, that might be true, but will it really prevent this from occurring again? Maybe we need an elastic defence system that accounts for human and system frailty, one that uses multiple points with different strategies.

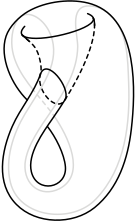

Think back to that quote above about the secure perimeter. This works when you have a vault with a single door. But what if you are hosted in a cloud? You’ve made the jump, you’re in (insert Hyperscale Infrastructure as a Service provider name here) cloud, instances everywhere, orchestration, microservices, etc. Where is the ‘door’? What is ‘outside’ versus ‘inside’? It’s more like a Möbius strip, or perhaps a Klein bottle. All sides are inside, all sides are outside. How do I make a secure perimeter when I don’t know what the sides are, or where the trust boundaries lie? And speaking of perimeters, we often think of them as outside to inside. What about the great egress? Perhaps we need some security in the *outbound* direction for the unlikely but real case of covert exfiltration, which is how the data gets back to the miscreants above.
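To make the outbound idea concrete, here is a minimal sketch of a default-deny egress check. The allow-list entries, addresses, ports, and the `egress_allowed` name are all hypothetical, chosen only for illustration. In practice this rule would live in an egress firewall or a cloud security group rather than in application code.

```python
import ipaddress

# Hypothetical allow-list: the only outbound destinations this workload needs.
# Addresses and ports are illustrative assumptions, not from any real system.
ALLOWED_EGRESS = [
    (ipaddress.ip_network("10.20.0.0/24"), 5432),     # internal database subnet
    (ipaddress.ip_network("203.0.113.10/32"), 443),   # one partner API (documentation address)
]

def egress_allowed(dest_ip: str, dest_port: int) -> bool:
    """Default deny: permit a connection only if it matches an allow-list entry."""
    addr = ipaddress.ip_address(dest_ip)
    return any(addr in net and dest_port == port for net, port in ALLOWED_EGRESS)

print(egress_allowed("10.20.0.15", 5432))    # matches the database entry
print(egress_allowed("198.51.100.7", 443))   # unknown destination, so it is refused
```

The point of the design is the default: anything not explicitly listed is refused, so stolen data has fewer ways out even if an attacker is already inside.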

So, we could think of the problem as:

- Parts of the defence will get breached (don’t go all in on a single strategy)

- Reduce the ability to go from the ‘weakest link’ to the ‘strongest link’ (east-west security)

- Reduce the value of the data obtained (encryption at rest)

- Reduce the likelihood of getting the data back out (south-north security, egress firewall)

- Reduce the amount of data that is ‘together’ (sharding, segregation)

- Create some breadcrumbs to facilitate catching/convicting the thieves (poison a bit of the data, make some fake records that trip alarms later, like the dye packs in money)

- Increase the amount of time and energy the attackers need to spend once in (tarpits, fake targets, etc.)

- Reduce the reachability once inside (if system A should only talk to system B, enforce that; just because A is trusted doesn’t mean you can skip preventing it from getting to C)
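The breadcrumb idea in the list above can be sketched in a few lines. This is only an illustration under stated assumptions: the key, the ID format, and the names `canary_id`, `CANARY_IDS`, and `touches_canary` are hypothetical. A real deployment would plant full decoy records and wire the check into access logging and alerting.

```python
import hashlib
import hmac

# Hypothetical secret, kept outside the database that holds the records.
CANARY_KEY = b"replace-with-a-real-secret"

def canary_id(n: int) -> str:
    """Derive a realistic-looking but fake record ID from the secret."""
    digest = hmac.new(CANARY_KEY, str(n).encode(), hashlib.sha256).hexdigest()
    return "P" + digest[:10].upper()

# IDs of the decoy records to plant among the real ones.
CANARY_IDS = {canary_id(n) for n in range(5)}

def touches_canary(record_ids) -> bool:
    """True if any accessed record is a decoy; no legitimate workflow should read one."""
    return any(rid in CANARY_IDS for rid in record_ids)

# A bulk read that sweeps up a decoy should raise an alarm.
print(touches_canary(["P0000000001", canary_id(3)]))
```

Because the decoy IDs are derived from a secret, they can be regenerated for checking without storing a telltale list next to the real data.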

The real value in data like this is the ‘upsell’. A lot of (spear)phishing attacks (like the current CRA scams) are dramatically more likely to succeed with a single ‘fact’ that the victim can’t imagine anyone else having: your SIN, your health-care provider’s name, your address, the last 4 digits of your phone number. Scammers can take low-level ‘who would care’ data and upsell it into ‘here’s a new house for me’ by convincing us they are legitimate. Don’t fall for it.
